Investigation into Pornhub offers opportunity to curb proliferation of ‘haunting’ online child sexual exploitation

Anyone with access to a smartphone or computer device can find rape videos and images of child pornography in seconds.

Some of the most popular websites in the world proudly display them, and even feature buttons at the top of their pages that say “Rape”.

At the Canadian Centre for Child Protection (C3P), the lines don’t stop ringing.

Each ring of the phone is tied to a desperate cry for help from someone trying to win a battle they should never have to fight: stopping the constant spread of imagery of their own abuse and exploitation.

This type of content is viewed millions of times an hour, around the world. Victims are revictimized over and over and over.

Intimate images or videos of them, whether shared with a former partner, filmed without their knowledge or filmed after they were attacked, have ended up on a popular porn site, available for anyone to see.

“We have a tsunami of these victims,” Lianna McDonald, the executive director with C3P, told the Standing Committee on Access to Information, Privacy and Ethics (ETHI) on February 22.

McDonald, along with other top child protection advocates and law enforcement officials, appeared before parliamentarians to add their voices to an ongoing investigation into the horrendous, rapidly spreading pandemic of exploitation. One of the major players in the online porn world, Pornhub, was the focus of the hearings. Many of the victims have complaints about the site, owned and operated by Canadian parent company MindGeek, and about the difficulty of getting non-consensual content, including imagery of minors, taken down from the website.

“Children have been forced to pay a terrible price for this,” McDonald said.

Now, federal legislators have an opportunity to address these debased acts that police agencies and child protection organizations across the globe have been trying to fight since the dawn of the internet. The startling rate of proliferation as the universe of the world wide web expands by the second has been too much for authorities to handle.

Far more resources and legislative tools are needed to slow the rampant exploitation of women and children around the world, and right in our own backyard.

The committee has heard from a number of victims who shared similar stories of finding out their intimate images were on the Pornhub website, and the traumatic experience that followed after requesting the parent company take the content down.

It has been a “haunting” ordeal, one said.

Being forced to jump through a series of hoops to prove their identity, having to email the company multiple times when requests went unanswered, or being flat-out ignored were the common themes of the testimony.

All the while, videos of them remained public, being consumed by millions of random strangers in some cases. Pornhub and Montreal-based parent company MindGeek denied almost all of the allegations presented in the evidence, claiming its practice is to remove videos immediately after they are flagged and to use sophisticated electronic systems to ensure they are not reposted. Testimony before the parliamentary committee has cast considerable doubt on these claims.

The Pornhub case has left many MPs in shock. “Haunted”, “disgusted” and “mind boggling” are just a few of the words used to describe what they heard. The testimony has led to commitments from MPs to hold top officials at MindGeek accountable for what has happened, and calls are growing louder for a full criminal investigation by the RCMP into the company’s apparent failure to report child sexual abuse material (CSAM) to the proper authorities for close to a decade, despite a legal requirement to do so. Evidence suggested the company ignored this rule until public outrage began to grow recently, when it suddenly began changing its practices.

For child protection advocates, it’s about time.

“We’ve been screaming from the rooftops that we are long overdue for regulation,” McDonald told the committee. Numerous past reports from C3P have detailed the extent of the problem. Most recently, in 2019, a document entitled How We Are Failing Children: Changing the Paradigm described how these crimes are happening at a large scale, right in our backyards and inside the various apps and platforms many people access on a daily basis. Yet the disturbing, taboo nature of sexual abuse keeps it from getting mainstream engagement and the attention it needs to effect real change.

Until now.

“Society has the power to demand change,” the report states.

This followed a 400-page study published in the fall of 2017 that told the stories of 150 survivors of child sexual abuse and exploitation.

Through a detailed survey and first-hand accounts, the Centre laid out the horrific reality of fear, shame and guilt that plague survivors and the problems that infect the criminal justice system and hamper attempts to track down perpetrators and hold them accountable. The findings of the International Survivor’s Survey are stomach-turning.

McDonald told MPs that while Pornhub is the only company currently under the microscope, the same level of scrutiny applied to a number of other internet service providers would reveal similar exploitation of child sexual abuse material. In fact, in some jurisdictions, this is already happening. Xvideos, Pornhub’s largest competitor, is currently under investigation in the Czech Republic for hosting illegal content.

“We’ve allowed digital spaces where children and adults intersect to operate with no oversight,” McDonald said. “There definitely needs to be more than a conversation about how we are going to take the keys back from industry.”

Change can’t happen soon enough. Recent studies and statistics indicate this problem is only growing in scale and complexity, both in the types of CSAM being made available online and the severity of the abuse inflicted on the child victims.

In November 2019, the Virtual Global Taskforce, an international organization made up of police agencies, non-governmental groups and industry partners dedicated to protecting children from sexual exploitation, released its latest Environmental Scan of child sexual exploitation on the internet to identify challenges, threats and new trends being observed by police institutions across the world.

Nearly three quarters of the organizations surveyed reported an increase in the volume of child sexual exploitation material reported to them. The National Center for Missing and Exploited Children (NCMEC) in the United States received 18.4 million reports in 2018, a 1,572 percent increase from the 1.1 million reports only four years before. It’s a trend that has continued, with John Clark, NCMEC’s president, telling the parliamentary committee his organization received over 21 million reports in 2020.

In Canada, the National Child Exploitation Crime Centre (NCECC) saw a 566 percent increase in the number of reports received between 2015 and 2018. The Cybertip line, operated by C3P, receives approximately 3,000 tips about suspected CSAM every month.

It amounts to nothing less than an “explosion” of digital platforms hosting illegal pornographic content, McDonald said. It’s not just Canada. There’s been a 57 percent increase in the number of registered web domains showing images of child exploitation, according to WeProtect Global Alliance.

The reality is “heartbreaking and daunting,” Clark told MPs. He explained that amid the “tremendous” increase, the videos being viewed by NCMEC staff are increasingly graphic, increasingly violent and involve younger and younger children, including infants, in a pay-per-view format. Unfortunately, these trends are not new, and Canada’s politicians should be well aware of this.

As a 2007 report from Canada’s Office of the Federal Ombudsman for Victims of Crime states: “We cannot afford to turn our heads or cover our ears because the problem is growing. And it is getting exponentially worse. Images are getting more and more violent, and the children in those images are getting younger and younger.”

“The trauma suffered by these child victims is unique,” Clark said. “The continued sharing and recirculation of a child’s sexual abuse images and videos inflicts significant revictimization on the child.”

Clark says that there are some technology companies employing significant efforts to combat CSAM on their platforms, including human moderators and technical tools. However, he says too often companies do not act until a concerning level of abusive material has already appeared on the site.

“NCMEC applauds the companies that adopt these measures. Some companies, however, do not adopt child protection measures at all, others adopt half measures or as PR strategies to try and show a commitment to child protection while minimizing disruption to their operation,” he said.

The explosion in reports to both NCMEC and the C3P translates to an increased workload for police agencies across North America who are tasked with investigating the reported abuse. While not every report filed with these agencies will qualify for an investigation, many of them do. In Brampton and Mississauga, the increased workload has overwhelmed the local Internet Child Exploitation (ICE) unit.

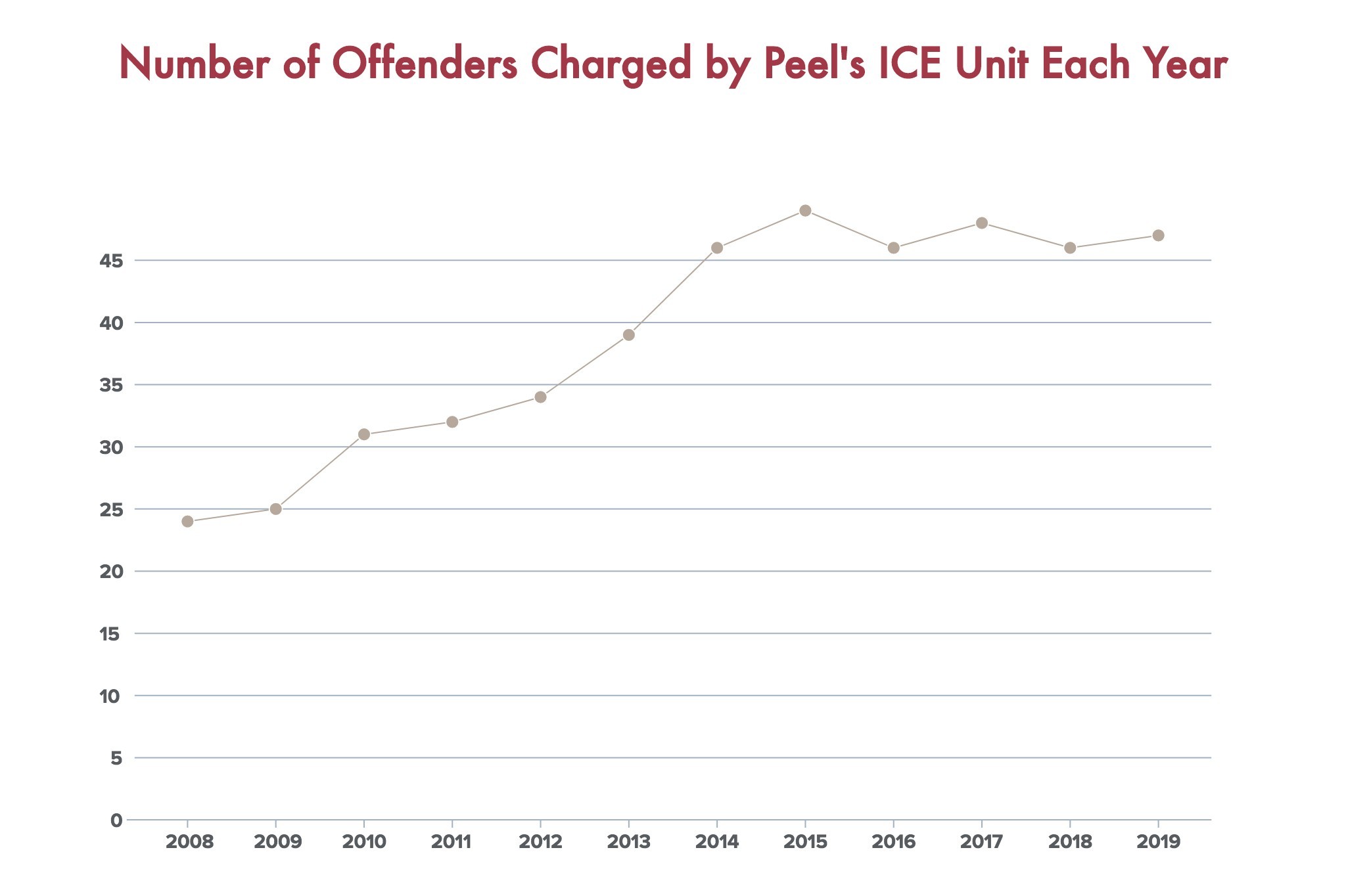

Detective Andrew Ullock, the lead officer in Peel police’s ICE unit, says there has been an exponential increase in the number of cases over the last decade.

Between 2018 and 2019, Peel’s ICE unit saw a 253 percent increase in referrals from the National Child Exploitation Crime Centre (NCECC), operated by the RCMP, which takes reports of potential child sexual abuse material found online and sends them to the police organizations in the jurisdiction of the case. In 2018, Peel received 156 referrals, a number that jumped to nearly 400 in 2019. It’s a trend seen across Canada; NCECC saw a 566 percent increase in the number of reports received between 2015 and 2018.

It isn’t only the number of cases increasing either. As online sharing has become easier and cloud and external storage options have proliferated over the last decade, the size of the CSAM collections investigators are finding has also increased. Last year, the ICE unit reported a 373 percent increase in the amount of evidence seized over the course of three years.

“It’s huge what we’re getting, several terabytes in a single computer,” Det. Ullock told The Pointer’s What’s the Point podcast. “The size of the collections are way bigger because they can get more images faster.”

The increase in evidence means more cataloguing and more background work for officers, so cases take longer to investigate and prosecute. Looking at the end result, it’s clear Peel’s ICE unit is maxing out its capacity to do the work.

Between 2004 and 2012, Peel’s ICE unit continuously increased its arrest record, from 24 individuals charged to 34. By 2014, the number of apprehensions increased to 46. However, over the last five years, things have flatlined, with 49 people charged in 2015, 46 in 2016, 48 in 2017 and 46 in 2018. In 2019, 47 people were charged in connection to child sexual abuse material investigated by Peel’s ICE unit.

The figures do not illustrate a plateauing of demand. They reflect stagnating resources, despite the need to hire many more officers to tackle the mountain of evidence flowing in each month.

This year marked the second time in the last decade that an officer was added to the unit, bringing the total number of investigators to eight. Meanwhile, the number of referrals Det. Ullock has received from the NCECC over the last year alone has doubled.

“In some ways we’ve made great progress, in others we’ve fallen behind,” he says.

For Ullock, it’s fairly easy to trace the proliferation of this material in the technological age. A large inflection point came with the introduction of digital cameras.

Prior to the ease offered by digital photography, someone looking to produce CSAM would have had to take these illegal photos or videos into a store or specialty shop to be developed, edited and processed, which was an obvious risk. Few had darkrooms or editing and production suites of their own.

Now, everything is in the palm of someone’s hand.

Digital cameras offer an easier way to both create and digitize these images. Then, with a few swipes, they can be uploaded and instantly transmitted around the globe.

Combine this with the growth of the internet itself, increased connectivity, lower barriers to access, the proliferation of smartphones with constantly improving camera and video features, and the ease of sharing files, even those of significant size, and it all makes for a perfect storm. Caught in the middle of this tornado of technology are the children preyed upon by pedophiles and those motivated to amass large collections of this abhorrent material.

Profit, power and sexual gratification are their motives.

It’s easier to create, easier to share, easier to remain anonymous online with the help of technology like encryption and VPNs, and the internet provides a direct avenue for someone to justify their deviant sexual interests with others who feel the same way.

“In the past, someone who had a sexual interest in children, that person was isolated, surrounded by people who they thought disagreed with them. Well now they can literally find thousands, millions of people who think the same way as them and it validates them,” Det. Ullock explains. “To top it all off, throw in a pandemic that forces everyone to stay at home and use computers and be online and you’ve got a perfect storm.”

So why is nothing being done?

Governments across the globe have known about this problem for decades. The VGT was formed in 2003 with the explicit goal of fighting online child exploitation, and the Government of Canada announced its national strategy to tackle the problem in 2004. But in the years since, it appears to have only become easier to exploit a child online.

When asked why little had been done to address Pornhub and the many complaints that have come forward from victims about the site, RCMP C/Supt Marie-Claude Arsenault, who chairs the VGT, noted that in some respects, the hands of law enforcement are tied when it comes to prosecuting these crimes. In some cases there is little evidence available and in others there are questions of jurisdiction if a company’s servers are located in another country.

Det. Ullock admits this is a problem Peel police’s ICE unit has also experienced.

“I have no ability to compel a company in the United States or anywhere else in the world for that matter to follow any investigative orders,” he said, while also noting that “generally we get compliance.”

NDP MP Charlie Angus was clearly not impressed with the response from law enforcement officials during the hearings, later tweeting “I don’t know what is more shocking: that Pornhub ignored mandatory reporting law for informing police about allegations of online child porn or the fact that RCMP never bothered to ask why and have zero investigations.”

When asked by The Pointer about any future investigations, the RCMP was noncommittal.

“In 2018, the RCMP sought to discuss the Mandatory Reporting Act with Mindgeek, and the obligations contained therein. Mindgeek informed the RCMP that, based on advice from their legal counsel, they would be reporting to the National Center for Missing and Exploited Children (NCMEC) in the United States. As a global company that is registered abroad, jurisdiction over Mindgeek is difficult to determine, as content is hosted outside of Canada,” their response states.

“Every report received by the NCECC is assessed upon intake and prioritized based on a number of factors, including a child at immediate risk or other time sensitive factors. Reports are further actioned and investigated within NCECC when the information received points to a child pornography offence being committed. For instance, the NCECC has a unit dedicated to identifying victims and removing them from harm. The NCECC conducts investigations which are then referred to law enforcement agencies of jurisdiction within Canada or internationally for further action. Online child sexual exploitation cases are investigated by police services of jurisdiction in every province. However, the RCMP often work closely with police services across Canada and abroad to assist with these investigations.”

A global effort was made in March of last year in an attempt to improve the practices of big tech companies across the world. The Five Eyes, a law enforcement intelligence alliance between Canada, Australia, New Zealand, the United States and the United Kingdom, released a set of voluntary principles for tech companies to follow to better prevent, target and eliminate CSAM from their platforms. The uptake of these principles is not encouraging.

Last week, the Phoenix 11, a survivors’ advocacy group, released a statement slamming the global effort to tackle this problem.

“It seems this has not been taken seriously, especially at a time where reports of online child exploitation are at an all-time high, driven by pandemic restrictions world-wide,” the statement reads. “With more children being at home and online, they are at risk now more than ever. We know this better than anyone, and it worries us greatly. It shows us that industry does not view this as a priority, does not understand the urgency, or both.

“We are also asking the 5 Eyes what other tech companies have supported the Voluntary Principles? It appears that only a handful have adopted them since the announcement 12 months ago — this is unacceptable. What pressure is being placed on the hundreds of tech companies that have not supported the Voluntary Principles? What excuse could there be for not supporting these principles? One year ago, industry pledged to do better, and we are still waiting.”

Twitter: @JoeljWittnebel
