Predators and a pandemic: parents urged to protect children as online exploitation projected to rise

Warning: Some material in this story describes graphic sexual abuse and may be disturbing to some readers.

Inside the “categorization room”, some of the hardest work happens.

In a world awash with pornography, officers in Peel Police’s Internet Child Exploitation (ICE) Unit confront a staggering amount of material. Most online porn is legal and involves content created by consenting adults, but the sheer scale of the demand for pornography is hard to comprehend.

GuardChild, an online organization committed to protecting children from exploitation, has gathered data from government and private sector sources. Its figures show that 90 percent of children between 8 and 16 have consumed online pornography, 22 percent of teenage girls in North America have posted nude or semi-nude images of themselves, and 86 percent of girls report conducting online chats without their parents’ knowledge.

The hugely popular website Pornhub, owned by a parent company headquartered in Canada, reported that it received 33.5 billion visits in 2018 and that almost 6 billion hours of pornography were viewed on the site in 2019.

The company is now at the centre of a growing movement to stop entities like it from providing the oxygen that child exploitation depends on.

Online child exploitation is another out-of-control pandemic, doing incredible harm across our planet.

Inside the categorization room, officers in Peel police’s ICE unit sit behind closed doors and sort through volumes of awful imagery scraped from cellphones, computer hard drives and cloud storage accounts belonging to offenders charged with possessing or viewing child sexual abuse material.

The “categorization” involves judging varying degrees of vile.

Peel Police's ICE unit is responsible for viewing and sorting through child sexual abuse material involving perpetrators and victims in Peel Region (Photo illustration).

In a legal sense, the sorting is meant to determine which images cross the threshold to be described as “child pornography” under the Criminal Code, meaning they show a person under the age of 18 engaged in explicit sexual activity.

Yet each image is of a child who has been abused to some degree, and the officers tasked with analyzing them must develop strong personal coping mechanisms to handle such deeply disturbing material.

The COVID-19 pandemic has changed this difficult but vital work in many ways. Previously, it was not uncommon to see several officers inside the categorization room at one time, as the volume of child sexual exploitation material (CSEM) seized and forwarded to the Peel ICE unit has increased dramatically over the last several years.

Now, Det. Andrew Ullock, head of the close-knit group, explains that officers need to limit their numbers inside the room to follow physical distancing protocols.

Workspaces are routinely sanitized, schedules have been spread out or staggered to limit the number of people in the office at one time, and new measures have been implemented during interviews so appropriate distances can be kept.

The work of the ICE unit cannot stop. Between 2014 and 2018, Mississauga and Brampton registered a 332 percent increase in sex crimes against children, rising from 38 crimes to 164, nearly three times the increase observed across Ontario.

Tips from the National Child Exploitation Coordination Centre (NCECC) about child sexual exploitation material either viewed in or originating from Peel police’s jurisdiction increased 543 percent between 2015 and 2018, ballooning from 28 reports to 180.

When The Pointer met with the ICE unit in September 2019, Peel had already surpassed the previous year's total with 184 reports at that point in the year. Things have only gotten worse.

Peel police ended 2019 with 396 referrals from the NCECC, meaning between 2015 and the end of last year, potential cases of Peel children being exploited online have increased more than 1,300 percent.

And now, a perfect storm might worsen this horrific criminal activity.

“It’s huge,” Ullock says. “People are moving more and more of their lives online so that gives more opportunities to commit offences online and of course, child exploitation offences are included in that.”

Traffic collisions have decreased during the pandemic because fewer cars on the road means less chance they will run into each other. The inverse is true for online offences like child sexual exploitation: more people online means more chances for predators to find victims.

Ullock says the Canadian Centre for Child Protection has already warned police forces across the country to prepare for an influx of new cases due to the pandemic. With kids stuck inside, and many of them turning to the internet and video games both to connect with their friends and to escape from the real world, they are more likely to run into an adult predator lying in wait, one who may also be stuck at home and perhaps out of work, with extra time on their hands.

“I couldn’t think of a better way to create conditions to cause this to increase,” Ullock says. “There’s more kids online more often and more predators online more often, it’s inevitable that they’re going to intersect with one another and when they do there’s going to be offences.”

Stranger Danger

We teach our children about the potential threat from shady characters at the park or the shopping mall from a very young age. The consequences of not heeding the “don’t take candy from a stranger” warning have been drilled into kids, creating an ingrained fear of strange men driving big vans.

While these types of predators still patrol the streets, a more common and more effective predator lurks in the digital world, often hiding in plain sight. But parents who are not digital natives are often not attuned to the world these offenders inhabit: the world of their children.

Online exploitation is not a new crime. Since at least the early 2000s, criminals have found ways to exploit others online. According to Polaris, a U.S. organization dedicated to ending human trafficking and modern slavery, traffickers have used a myriad of different apps and social media platforms to find victims.

“Online recruitment has existed for as long as there has been widespread access to internet platforms,” reads the organization’s 2018 report On-Ramps, Intersections and Exit Routes. “Sex trafficking survivors who attended Polaris focus groups and who were trafficked in escort services, outdoor solicitation, remote interactive sexual acts, pornography and in strip clubs and bars, even discussed their common experience of being recruited on MySpace in the early-mid 2000’s.”

While MySpace may be a little outdated for the Gen-Zers, the platforms they know, love and visit every day are also infected with the same problems.

Between January 2015 and December 2017, Polaris, which also operates the United States Human Trafficking Hotline, recorded 845 potential victims recruited using internet platforms, including 250 potential victims recruited from Facebook, 120 through online dating sites, 78 through Instagram, and 489 through other online platforms.

Whether it’s a teenager meeting a trafficker through social media, or a young child being tricked into sending sexually explicit images to someone, the potential for these crimes to persist speaks to a significant, ongoing disconnect between parent and child.

According to a study completed by Common Sense Media last year, about 1 in 5 children in the United States have a smartphone before the age of 8, 53 percent have one before the age of 11, and 84 percent of teenagers have their own phones and spend considerably more time with their eyes locked on the screen.

While games and YouTube videos are seen as a harmless way to keep children occupied – especially when locked inside during a pandemic – and social media may be a good way for teens to stay in touch with their friends, both can pose significant risks that many parents fail to understand.

Smartphones offer a wealth of opportunity and knowledge, but also all the dangers and threats that come with having an unknown world at your fingertips.

For parents who did not grow up with a smartphone in hand, and who were taught to worry more about the man with candy in a van near the neighbourhood park than the one on the other side of a monitor, these threats can be hard to grasp. But to protect their children, they must change that.

“You can’t supervise it unless you know how it works,” Ullock says.

Facebook, Instagram, Snapchat, TikTok: parents hear their kids talking about these apps all the time. Each has a different purpose and different features, and each offers different ways for exploitation to go undetected. Keeping up with all of them seems like a daunting task, but Ullock says protecting children starts with laying a foundation of basic guidelines.

Number 1: “The parent should always have ultimate control of the device.” Especially at night. It’s 2 a.m., do you know who your children are talking to?

Number 2: “The parents need to approach this more as guidance.” This is very important.

It’s not a matter of setting up rigid rules for the internet. Setting strict rules can actually have the opposite effect of what parents want. You want your child to come to you if there is a problem. However, if they feel like they broke one of your “rules” they may hide what is going on for fear of getting in trouble. This plays right into the hands of the predator.

“We see that sometimes where something has been going on for around a year, and [you ask the child], ‘Why didn’t you say something?’ [And they say,] ‘Well, I was told not to do this and I figured I’d be in trouble,’” Ullock says. “They need to know if they run into a problem, at the earliest time they know it’s a problem, they need to go to mom and dad, and say, I need help.”

These are not easy conversations to have. For young children and teenagers entering puberty, talking to their parents about anything sexual is the pinnacle of embarrassment and awkwardness. It can be difficult for parents as well, but to save them from years of potential torment and repetitive trauma, these conversations are essential.

“Not talking about it doesn’t mean it’s not happening,” he says.

To start, Ullock advises approaching the conversation on common ground. It’s not a matter of “do this or don’t do this,” but of trying to educate children about the dangers that exist and stressing that this is about protecting them. Resources to assist with such conversations can be found on the Peel Regional Police website, through the Canadian Centre for Child Protection and from the Ontario Provincial Police.

Having these difficult discussions with our children is crucial. As one Ontario Court justice explained last year:

“Once images or videos are circulated, the degradation of these children becomes both permanent and global. Images once distributed through [an] informal network can never be truly eliminated from circulation. The harm is both acute and perpetual. The growing numbers of victims — most unidentified – suffer from wounds that are continually re-opened and harm that can manifest itself over decades.”

Such harm is a parent’s worst nightmare, and can be prevented.

While the exploitation of children online is not a new crime, societal awareness of the issue, the consequences, and what can be done to eradicate the scourge, has taken years to evolve.

The impact on families is unimaginable.

When she was in Grade 7, Amanda Todd met a man online. After some flattering conversation, she flashed him. The man screen-captured the image and used it to try to elicit further “shows” from her. When she refused, he used it to blackmail her, sending the image to her friends repeatedly, even as she switched schools. In 2012, at the age of 15, Todd hanged herself at her home in Port Coquitlam, B.C.

A screengrab from a YouTube video posted by Amanda Todd describing the harassment she faced. Todd killed herself in 2012.

In 2011, 15-year-old Rehtaeh Parsons attended a party with some friends where she was reportedly raped by four teenage boys. An image of the rape was captured and widely circulated around her high school. After 17 months of torment, she hanged herself.

These tragic deaths, and countless others, have spurred national and international conversations about cyberbullying and the legislative measures that are needed to protect children on the internet.

Following Parsons’ suicide, her home province of Nova Scotia pushed through new legislation to create stricter rules and punishments for cyberbullying, including the sharing of these types of images. In 2015, the Nova Scotia Supreme Court struck down the law, ruling that it infringed on rights guaranteed by the Canadian Charter of Rights and Freedoms.

This is a clear example of the difficulty lawmakers face in trying to legislate a space as abstract as the internet.

If you build a school, or a mall, or even a public park, rules can be created to govern that space to avoid chaos. The internet is not such a defined space, and in many cases, companies will create these online worlds, with complete disregard and zero accountability for what happens inside them.

Children often fail to grasp that they are entering spaces with next to no rules, where the dangers inside pose significant risk.

The thought that someone on the other side of the world would want to use them for sexual gratification, something they may be too young to fully understand, is beyond their comprehension.

Parents and legislators need to ensure proper protections are in place. This is exceptionally difficult as the internet is ever-changing, and, like the endless sprouting heads of a hydra, new apps that connect to it continue to pop up. Getting a handle on the constantly expanding problem is a Herculean task.

The police and other criminal justice agencies are now focussing on the online spaces where abusive and illegal content is hosted and attention has fallen on companies operating the websites, or the Internet Service Providers (ISPs), who, since 2011, have been mandated to inform law enforcement whenever they become aware of material containing child sexual abuse on their servers.

This has been met with varying degrees of success. While the federal government and law enforcement officials will say companies have come a long way from where they were ten years ago, there are still glaring examples of how websites fail to address these significant problems.

In the same way that Facebook has been forced to respond to the spreading cancer of fake news infecting its platform, video-sharing sites such as YouTube have had to enhance screening procedures to catch harmful material before it goes public.

In March, the federal government, along with other global partners, released a set of “voluntary principles” for companies to follow to better address the increasing prevalence of this material online. It’s a good step, but it’s not clear whether the principles will reach the companies that most need to hear them, or whether voluntary action will have any real impact.

The Pornhub Problem

Pornhub, one of the world’s largest sources for pornography on the internet, is facing a massive backlash for hosting videos and images depicting real rape (as opposed to that which is staged and fetishized in some forms of pornography), child abuse, and human trafficking victims on its website, and allegedly doing very little to remove this content from circulation, even after pleas from the victims depicted in the content who have asked to have it removed. Pornhub denies these allegations.

Laila Mickelwait, the director of abolition with Exodus Cry, an organization dedicated to ending exploitation in the commercial sex industry and aiding victims, is the driving force behind the Trafficking Hub campaign, which is pushing to get Pornhub shut down and its executives held accountable. The petition on Change.org is closing in on 1 million signatures, a significant milestone.

“It is very encouraging that even in the midst of a pandemic, where there’s so much concern about a variety of important issues, that this is one that has taken precedent for many people,” she told The Pointer. “There is a spectrum of serious violations and abuses that are taking place on Pornhub and people are beginning to realize that the site is infested with the rape, exploitation and trafficking of women and children. Attention is being brought to this problem and that is a positive step in the right direction because this has been allowed to go unchecked for too long. It's time to get things back in order and to hold the company accountable.”

Laila Mickelwait is the driving force behind the Trafficking Hub campaign.

If the stories weren’t widely reported, they would be hard to believe.

In October 2019, a 15-year-old girl in Florida, missing for nearly a year, was located by police after her mother spotted images and videos of her on Pornhub.

There’s the story of Rose Kalemba, who was abducted at knifepoint about 10 years ago, when she was 14 years old, and raped for 12 hours. Videos of the assault were posted to Pornhub. Kalemba pleaded with the company to take them down but was ignored. Pornhub removed the videos only after she posed as a lawyer.

Her allegations have been widely reported, including by the BBC and The Guardian in the UK.

In January of this year, a judge ordered a California company to pay $13 million to 22 young women who filed a class action lawsuit saying they were tricked into doing pornography. The ruling states that the company behind the GirlsDoPorn videos earned millions of dollars by fooling the women, many of whom believed they were responding to ads for modelling jobs.

The owners of the video channel are also facing criminal charges for sex trafficking. Despite promises to remove them, and evidence that these videos depict the abuse and rape of human trafficking victims, GirlsDoPorn videos have still been found on Pornhub. A spokesperson for Pornhub told The Pointer that the GirlsDoPorn videos are no longer on the website.

These are just three of the countless examples of such material finding its way onto Pornhub. An investigation by the Internet Watch Foundation confirmed 118 instances of child abuse featured on Pornhub, half of which were classified as Category A abuse, involving either penetration or sadism.

It remains unclear if Pornhub, or its parent company MindGeek, has improved its systems to better flag and remove this content. Pornhub has said in previously released statements that it is committed to removing this content from its websites and implements software tools to do so.

“Pornhub has a steadfast commitment to eradicating and fighting any and all illegal content on the internet, including non-consensual content and under-age material. Any suggestion otherwise is categorically and factually inaccurate,” a Pornhub spokesperson told The Pointer. “Our content moderation goes above and beyond the recently announced, internationally recognized Voluntary Principles to Counter Online Child Sexual Exploitation and Abuse.”

The Pornhub spokesperson said the site has “state-of-the-art, comprehensive safeguards” across its platforms to remove material that breaches its policies.

“This includes employing an extensive team of human moderators dedicated to manually reviewing every single upload. This allows us to take proactive action against illegal content,” the spokesperson said. “In addition, we have a robust system for flagging, reviewing and removing all illegal material, and age-verification tools.”

These tools include automated technologies that use photo and video “fingerprinting,” which can detect material previously flagged as containing child abuse or illegal activity and prevent it from being re-uploaded to the platform.
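For readers curious how fingerprinting differs from simply matching files, here is a toy sketch in Python. It uses a simple “average hash” chosen purely for illustration; it is not the technology Pornhub or Project Arachnid actually uses, and the 8x8 grid of made-up pixel values, the function names and the 10-bit match threshold are all hypothetical. An exact cryptographic hash changes completely when even one pixel changes, while a perceptual fingerprint records the overall pattern of light and dark, so a lightly altered copy can still be recognized.

```python
import hashlib

# Toy illustration only: a simple "average hash" over an 8x8 grid of
# grayscale values (0-255). This is NOT the technology used by Pornhub or
# Project Arachnid; it only shows the general idea of fingerprinting.

def average_hash(pixels):
    """Return a 64-bit fingerprint: one bit per pixel, set if brighter than the mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits

def hamming_distance(a, b):
    """Count how many fingerprint bits differ between two hashes."""
    return bin(a ^ b).count("1")

def exact_hash(pixels):
    """A cryptographic hash: any change to any pixel produces a different value."""
    return hashlib.sha256(bytes(p for row in pixels for p in row)).hexdigest()

# A made-up 8x8 "image" and a slightly brightened copy of it.
original = [[(32 * x + 8 * y) % 256 for x in range(8)] for y in range(8)]
brightened = [[min(p + 3, 255) for p in row] for row in original]

MATCH_THRESHOLD = 10  # hypothetical cutoff for treating two images as the same

print(exact_hash(original) == exact_hash(brightened))  # False
distance = hamming_distance(average_hash(original), average_hash(brightened))
print(distance, distance <= MATCH_THRESHOLD)  # 0 True
```

Because the whole picture is brightened evenly, every pixel keeps its position relative to the average, so the fingerprint is unchanged even though the exact hash no longer matches. Simple schemes like this one can be thrown off by other kinds of edits, which is why how robust a given fingerprinting system is matters so much.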

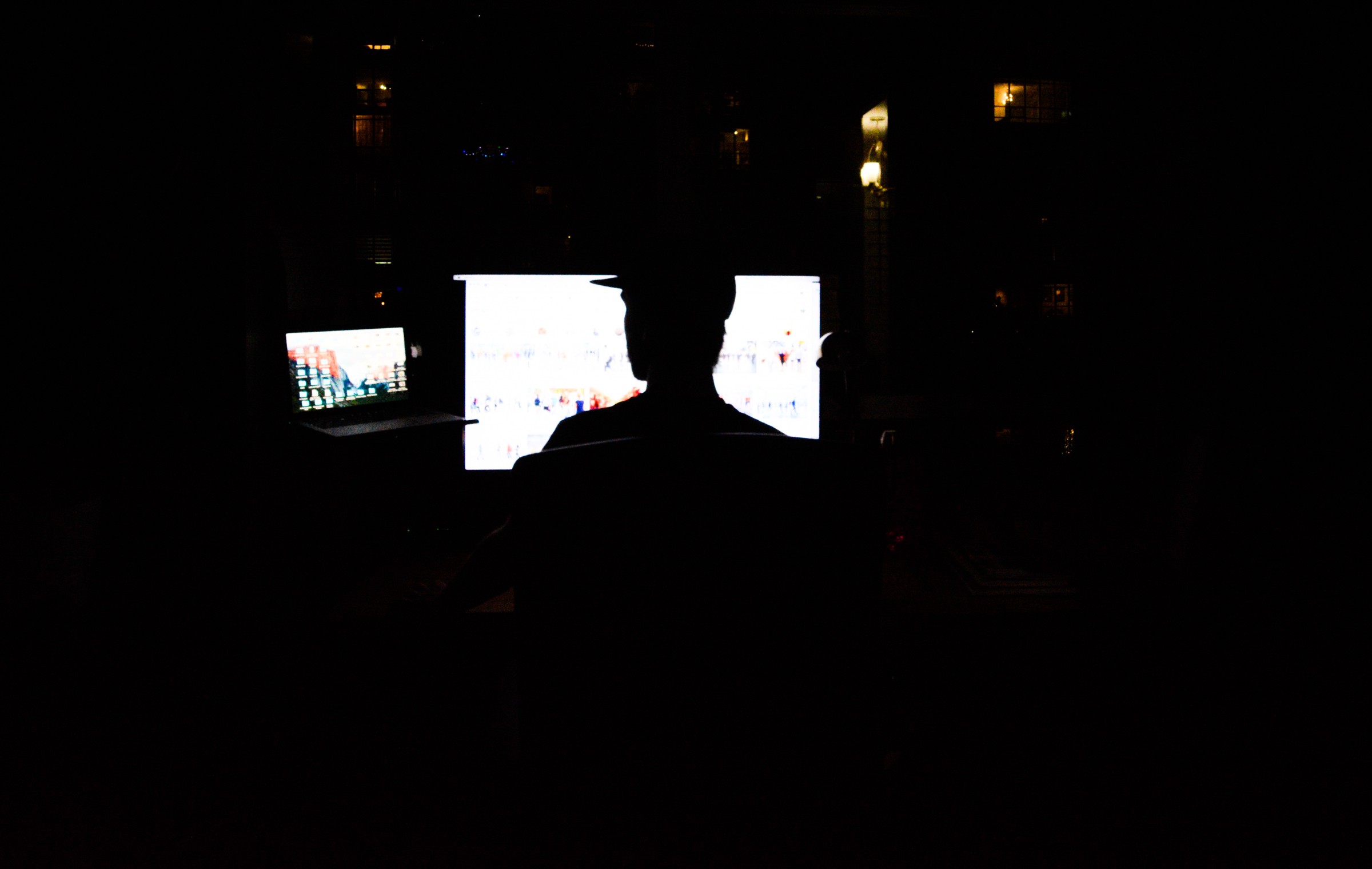

Similar software is used by the Canadian Centre for Child Protection’s Project Arachnid, which aims to remove child sexual abuse material from the internet.

These programs have had mixed success. For instance, an investigation by Vice/Motherboard earlier this year found that Pornhub’s fingerprinting software could easily be fooled by making minor edits to a video that had already been flagged.

“It is Pornhub’s priority to ensure a safe, inclusive and diverse online community that is free from abusive and illegal content,” reads a statement on the company’s website.

The Project Arachnid software, run by the Canadian Centre for Child Protection, sweeps the internet for known child sexual abuse material and prevents these images and videos from being shared repeatedly.

Pornhub states it has a “zero-tolerance policy toward sexual abuse material” on the platform and says it reports cases of apparent child sexual abuse to the National Center for Missing and Exploited Children.

The Pornhub spokesperson also referred The Pointer to a previous statement the company made about Exodus Cry, the group behind the Trafficking Hub petition, calling it a “radical rightwing fundamentalist group.” In the same statement, provided to a media outlet in March ahead of the International Women’s Day protest, the spokesperson said Exodus Cry’s founders “have long vilified and attacked LGBTQ communities and women’s rights groups, aligned themselves with hate groups, and espoused extremist and despicable language.”

Exodus Cry dismisses the allegations as “completely false.”

“Pornhub's completely false accusations and ad hominem attacks are reflective of the fact that they have no legitimate defense for the pervasive rape, trafficking and exploitation of women and children they profit from,” Mickelwait says.

“This petition and movement to hold Pornhub accountable for these crimes is backed by over 300 of the world's most respected child protection and anti-trafficking organizations. Almost one million people from 192 countries from all backgrounds – Christians, atheists, liberals, conservatives, even satanists have endorsed the campaign to shut down Pornhub for its complicity in sex trafficking.”

Mickelwait states that even those within the porn industry have pushed back against Pornhub and MindGeek for cashing in on the “exploited and assaulted bodies of the most vulnerable in society.”

“If false, childish name calling is the best defense that Pornhub has, they are clearly in a great deal of trouble. An apology to its many victims would be a much better look for them, but instead they gaslight victims and block them on social media for calling out their abuses.”

Such sites create more demand for exploitative content, and without them, many who commit these crimes might have no outlet for the material they create.

“Pornhub has an important responsibility, a duty to be vetting and verifying reliably that those who are in the videos it hosts and monetizes for profit are not trafficked, raped and exploited women and children. That has not been done and it’s not being done, and these are basic common-sense expectations,” Mickelwait says, noting that Pornhub does not appear on the National Center for Missing and Exploited Children's list of electronic service providers that had reported abusive material in 2019.

Along with the growing petition, this issue also garnered attention earlier this year, when a protest was held outside the Montreal offices of MindGeek on International Women’s Day calling for Pornhub to be shut down. The next day, a group of MPs and senators sent a joint letter to Prime Minister Justin Trudeau asking the federal government to investigate claims that Pornhub hosts illegal material and that the company failed to remove this content when asked to do so.

The Trafficking Hub campaign has garnered nearly 1 million signatures calling for Pornhub to be shut down.

“The ability for Pornhub, and other online companies, to publish this content, and in some cases to profit off crimes committed against children, victims of sex trafficking and sexual assault, is fundamentally contrary to any efforts to increase gender equality in Canada and protect women and youth from sexual exploitation,” the letter reads.

“The Government of Canada has a responsibility to ensure that people who appear in sexually explicit content that is uploaded and published online by companies operating in Canada are not children, nor victims of human trafficking or sexual assault. Further, the Government of Canada has a responsibility to investigate those who produce, make available, distribute and sell sexually explicit content featuring victims of child sexual exploitation, sex trafficking, and sexual assault.”

Signed by three senators and six MPs from different political parties, the letter asks the government to review existing legislation for areas that could be strengthened, ensure that MindGeek is in compliance with existing legislation, and “take whatever other steps are necessary at the federal level to ensure that companies that sell, produce, make available or publish sexually explicit content be required to verify the age and consent of each individual represented in such material.”

A similar call is also being made in the United States, where the Department of Justice has been asked to investigate the website.

The Peel Regional Police have had their own run-ins with the porn giant. As Ullock explains, Peel police have had to approach the company about removing content several times, and most of those requests have resulted in the content being removed.

However, requests made to some site operators are not always successful.

“At the end of the day, I’d like to tell you that we can get everything removed, but it’s the internet, no one is in charge of the internet. We definitely do what we can and we definitely understand victims’ concerns,” Ullock states. “Usually it works, but I can’t claim 100 percent success with it.”

The shuttering of a website by law enforcement is not without precedent. In 2018, the United States government shut down Backpage, a classified advertising website that was a hub for human traffickers looking to sell their victims online. The company’s CEO later pleaded guilty to money laundering and prostitution-related charges.

Outside of law enforcement, public pressure has also shown itself effective in getting companies to react. In 2010, Craigslist bowed to public pressure and shuttered the “adult” portion of its website after complaints that sex traffickers were using the platform.

In Pornhub’s case, a similar outcome may only be a matter of time. Last year, Unilever faced backlash for advertising on the site and immediately stopped, and the popular Dollar Shave Club has also stopped working with the company.

Mickelwait says she’s hopeful the Trafficking Hub campaign will achieve its goal.

“The only reason why there have not been legal ramifications so far is that there hasn’t been the awareness, there hasn’t been the public outcry and there hasn’t been the political will to do something about it, but that is all changing now,” she says.

In August 2019, the Ontario Provincial Police (OPP) carried out the successful Project Peacehaven, targeting predators looking to lure underage children online. The results were staggering.

In just 72 hours, officers spoke online with 36 suspects, or one every two hours, and approximately 65 offences were committed during that time.

Eight people were eventually arrested. Of those apprehended, six of them drove to meet the “child” for sexual purposes, one of whom drove nearly 300 kilometres to meet a victim who had been fabricated as part of the sting.

“Quite often, we have offenders from different countries,” states Detective Sergeant Brian McDermott, the lead officer on the project, in a press release. McDermott notes in a previous investigation, officers arrested an individual who traveled from Ontario all the way to Florida in order to sexually assault a child.

This is indicative of a disturbing trend observed around the world, as the internet has allowed predators to meet, connect and exchange material.

“That’s one of the things that absolutely concerns me and has for the last 10 years, is that child exploitation material is a type of currency online,” Ullock states. “Sometimes, they say it’s got to be something that you created. So in other words, go out and abuse a child to join this group.”

The cross-border nature of this crime means police organizations from across Canada and North America, and even those overseas, need to work together to try to solve these complex crimes.

In the same way that officers have adapted to the new normal of physical distancing around the office, they need to be just as versatile in their efforts to catch these predators.

“We have to adapt. We don’t have a choice, we have to come in to work, we have to keep the public safe,” he says. “Whatever problems come up we’ll find solutions, we’ll figure it out.”

The constant barrage of disturbing images can be too much for even the most highly trained officers to handle. The duties of those in the ICE unit are labelled as high risk and Peel Regional Police have strong mental health supports in place to ensure they can continue to carry out the vital, but deeply disturbing work.

Ullock, who worked with the unit from 2010 to 2017 before heading out on the road as a patrol sergeant for two years, knows how tough, but also how valuable, the work can be.

“There are challenges, there are hard days, there are cases that without question I still remember and still have an impact on me,” he says. “It’s important work and other officers I know who have gone on to do other things, they don’t forget, they never forget the work that they did in here and they feel it’s some of the most important work that they ever did.”


Twitter: @JoeljWittnebel

