Privacy vs. safety: Peel police struggle with use of controversial facial recognition technology

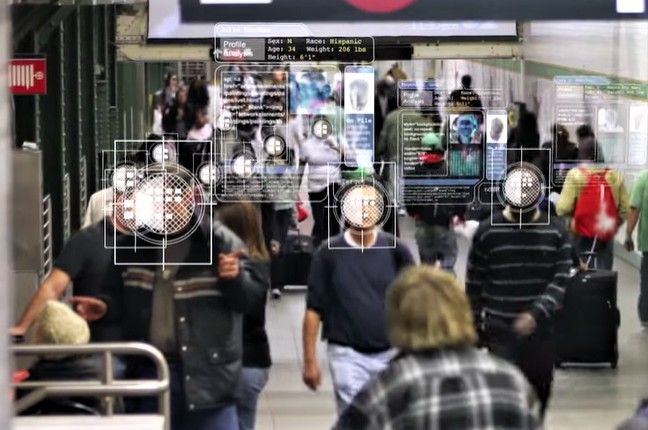

The global use of facial recognition software, which mines billions of online photographs that were never intended for use by law enforcement agencies, is fuelling a contentious debate that pits public safety against privacy and civil rights.

Last week, the Peel Regional Police (PRP) admitted to having used facial recognition software. After the matter became public, a PRP spokesperson told The Pointer it was only for “testing purposes” and further usage of the software is halted until a full evaluation of the technology is undertaken.

The technology is now widespread as a verification process, just like a social media user who tags faces in a photo. For law enforcement, facial recognition software has become an indispensable tool for identifying a potential suspect. Where a police investigator in the past had to manually sift through hundreds of pages of grainy images, the new tech makes the once painstaking and mundane process much more manageable. The technology is also remarkably accurate.

The big problem: until a recent New York Times article, it was being used without the public’s knowledge. In Toronto, the police chief was in the same situation as most of those his force serves — unaware that his officers were using the groundbreaking, but highly controversial technology.

In January, a front-page New York Times article revealed that a secretive American company called Clearview AI was helping more than 600 law enforcement agencies, including the Toronto Police Service and Peel Regional Police, match photos of unknown people with their online images. Clearview AI can identify people from a database of three billion images, scraped from websites like Venmo, Facebook and YouTube. This is a dramatically higher number of images than is currently available on other facial recognition databases, including those previously used by police forces.

Using facial recognition software, dated photos can be reanalyzed in the hopes of locating a person who’s been declared missing for years, possibly solving a decades-old cold case in the process. If there's footage of someone breaking into a home, police can and do use facial recognition software to cross-reference databases of mugshots with billions of images scraped from a wide range of online platforms that host large volumes of user-posted photos. In a relatively short amount of time, an investigator can ID a possible suspect.

While law enforcement agencies enthusiastically embrace the technology, data privacy researchers are urging caution. What’s more, they are very concerned police don’t have the necessary tools or understanding to effectively evaluate issues surrounding what is still a new technology.

Concerns about facial recognition technology came to light last week in Southern Ontario, with police forces acknowledging, and in the case of the Toronto Police Service initially denying, the use of the software developed by Clearview AI.

The company’s shadowy existence was brought into the public eye thanks to a recent article by the New York Times, which revealed that billions of images had been “scraped” by Clearview from sources like Google and Facebook to create a searchable database which had been shopped to law enforcement.

Use of the contentious facial recognition software was acknowledged by a PRP spokesperson.

“A demo product of the Clearview AI software was provided to Peel Regional Police for testing purposes only, however the Chief [Nishan Duraiappah] has directed that testing cease until a full assessment is undertaken pursuant to a concurrent ongoing facial recognition review project,” wrote Peel Constable Kyle Villiers in an email Tuesday.

“Our review team is working closely with the Information and Privacy Commissioner’s [IPC] Office as well as other police organizations to ensure any future application of facial recognition technology is in keeping with privacy legislation, guidelines and contemporary standards.”

Villiers did not disclose whether or not PRP had sought the approval of its civilian oversight board before proceeding with the testing.

“At this point, I am not able to provide an answer to that question with the information I have been provided,” said Constable Villiers.

None of the police board members responded as to whether or not they were aware of the Clearview testing, and no agenda items or documents were found indicating a debate or update on the use of the technology.

A spokesperson for Mississauga Mayor Bonnie Crombie, an elected official who sits on the seven-person board, declined comment on the matter and referred questions back to the board.

Information and Privacy Commissioner Brian Beamish also declined comment on the matter, referring instead to a recent public statement advising law enforcement agencies to stop using Clearview.

“We’ve learned through recent media reports that other police services may also be using Clearview AI. They should stop this practice immediately and contact my office. I’ve also asked my staff to contact those we’ve become aware of through the media to discuss the legality and privacy implications of their use of this technology,” said Beamish in the statement, which is posted on the provincial agency’s website.

A key concern of watchdogs and researchers when it comes to law enforcement agencies using powerful facial recognition software like Clearview is the potential for racial bias.

As past police data shows, Peel Police officers made nearly 160,000 street checks over a five-year period (2009-2014), demanding valid credentials from individuals who were not suspected of any crime. Close to a quarter of those checks involved Black people.

According to the data, Black people were three times more likely to be “carded” than white people. And a 142-page report in 2019 on Peel Region’s diversity practices found that, as recently as 2017, just 20 percent of uniformed police officers were visible minorities.

Given the past and more recent attitudes of police leaders, who have strongly denied the presence of institutional racism within the force, there are significant concerns that wider application of facial recognition technology will only reinforce pre-existing racial biases and lead to the targeting of innocent people.

“Right now, we don’t have any independent assessments of how accurate or inaccurate these tools are,” said Chris Parsons, a data privacy researcher with The Citizen Lab, which is run out of the University of Toronto’s Munk School of Global Affairs and Public Policy. “But we do know generally that facial recognition technology quite repeatedly has false positives and false negatives,” errors in which the system either wrongly identifies the subject of an image or wrongly rules them out.

While facial recognition technology has been much more widely embraced in other countries, privacy legislation has limited its use in Canada, including the ability of officers to search through pre-existing police databases, like mugshots. Even then, police may have to apply for judicial permission or have legitimate reasons for doing so, said Parsons.

But just because there are protections in place, said Parsons, it doesn’t mean law enforcement hasn’t already been engaging in a potentially unconstitutional form of surveillance that intrudes upon the rights and privacy of unsuspecting people.

“We know in the Canadian context, broadly, the police forces have tended to adopt technologies with little awareness or civilian oversight,” said Parsons.
